The Executive Guide to Generative AI Integration

By Thayer Tate

Generative AI is changing enterprise systems faster than most organizations can operationalize it. While much of the market remains focused on model capabilities, the larger challenge is no longer generating content at scale. It is determining how AI-generated outputs are validated, governed, and integrated into existing workflows.

Many organizations initially see rapid productivity gains from AI-enabled automation. Sales teams generate proposals faster, support teams respond more quickly, and marketing teams scale content production dramatically. But as adoption expands, a different bottleneck emerges: decision-making capacity struggles to keep pace with output generation.

For example, sales teams may begin generating AI-assisted proposals faster than legal or product teams can validate pricing, compliance language, or technical accuracy. Productivity rises locally while coordination complexity increases across the organization.

In enterprise environments, the success of generative AI integration depends less on the model itself and more on how effectively systems, workflows, and governance structures absorb AI-generated outputs.

What Generative AI Integration Actually Involves

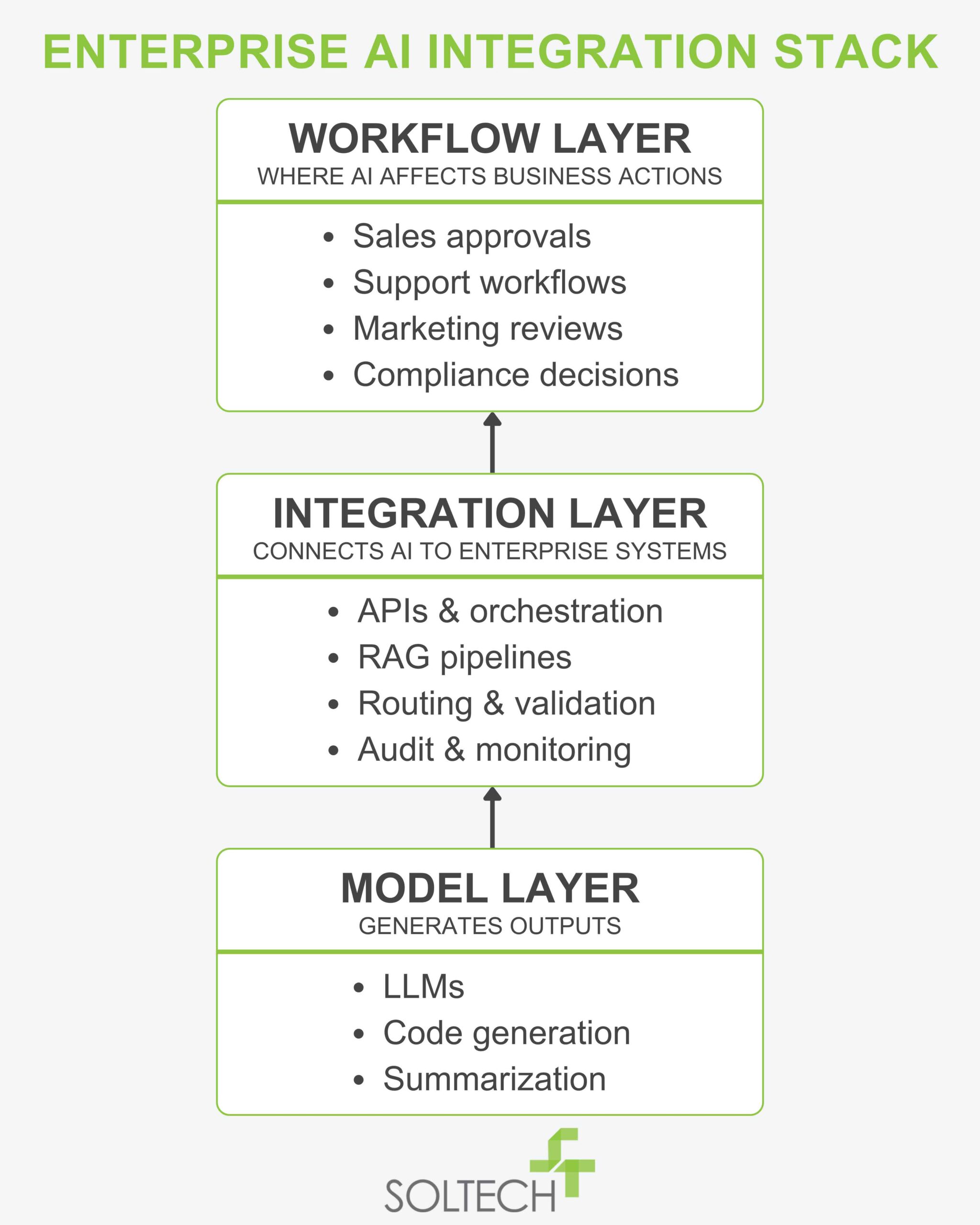

At an enterprise level, generative AI integration is not a single implementation step. It is the coordination of three distinct layers:

In practice, this often appears in retrieval-augmented generation (RAG) pipelines, where enterprise systems connect large language models to internal knowledge bases, APIs, and workflow engines. The challenge is rarely model capability alone. It is ensuring outputs remain traceable, governable, and reliable within existing business systems.
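To make the traceability point concrete, here is a minimal sketch of a retrieval step that records which internal documents fed a prompt. Everything in it is an illustrative assumption: the `Document` fields, the source names, and the word-overlap ranking (a stand-in for whatever vector or keyword search a real pipeline would use). No model is called; the sketch only shows the prompt and its provenance travelling together.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    doc_id: str
    source: str  # hypothetical system of record, e.g. "pricing-db"
    text: str


def retrieve(query: str, docs: list[Document], k: int = 2) -> list[Document]:
    """Rank documents by naive word overlap with the query (stand-in for real search)."""
    query_words = set(query.lower().split())
    scored = [(len(query_words & set(d.text.lower().split())), d) for d in docs]
    ranked = sorted(scored, key=lambda pair: pair[0], reverse=True)
    # Keep at most k documents, and only those that matched at least one word.
    return [doc for score, doc in ranked[:k] if score > 0]


def build_prompt(query: str, context: list[Document]) -> dict:
    """Assemble the prompt and keep a record of which documents fed it."""
    context_block = "\n".join(f"[{d.doc_id}] {d.text}" for d in context)
    return {
        "prompt": f"Answer using only the context below.\n{context_block}\n\nQuestion: {query}",
        "sources": [(d.doc_id, d.source) for d in context],
    }


# Hypothetical internal knowledge base.
KNOWLEDGE_BASE = [
    Document("kb-1", "pricing-db", "Enterprise plan pricing is 40 dollars per seat"),
    Document("kb-2", "legal-wiki", "Standard contracts include a 30 day termination clause"),
    Document("kb-3", "product-docs", "The export API supports CSV and JSON"),
]

query = "enterprise plan pricing"
request = build_prompt(query, retrieve(query, KNOWLEDGE_BASE))
print(request["sources"])
```

Because the `sources` list is stored alongside the prompt, a reviewer can later see which system of record an answer was grounded in, rather than reconstructing it after the fact.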

As orchestration layers mature, business logic increasingly shifts into prompts, routing rules, and AI coordination layers rather than traditional application code. This makes outputs harder to audit, reproduce, and govern over time.

When integration layers are treated as simple connectors, organizations lose visibility into how data is transformed before reaching the model. As adoption scales, this creates inconsistencies that become increasingly difficult to trace across teams and workflows.

The Shift from Data Bottlenecks to Decision Bottlenecks

In many organizations, early AI adoption focuses on improving access to information. Generative AI accelerates this quickly. Teams can generate summaries, drafts, and analyses with minimal effort.

However, as adoption grows, a different constraint emerges.

The limiting factor becomes not access to data, but the ability to validate and act on AI-generated outputs. In other words, output scales faster than decision capacity.

The pattern is consistent across functions. Marketing, sales, and support each gain speed locally, while the review, validation, and decision-making steps that sit between them gain none.

Across enterprise AI initiatives, organizations consistently underestimate the complexity of aligning AI-generated outputs with existing approval processes, accountability structures, and operational constraints.

- Review cycles expand as more content requires validation

- Different teams rely on different data sources, producing conflicting outputs

- Decision ownership becomes less clear when outputs are partially automated

- Execution slows as coordination increases

This is where business impact becomes visible. Faster production does not automatically translate to faster execution. Without alignment, organizations may see increased activity without a proportional increase in outcomes.

A Realistic System Evolution Scenario

A common pattern emerges as AI adoption expands across functions. Sales teams refine prompts to improve proposal quality while support teams independently modify workflows to handle escalation and compliance requirements. Initially, both systems improve local efficiency. Over time, however, inconsistencies emerge. Different workflows reference different product data, updates propagate unevenly across systems, and organizations become dependent on a small number of individuals who understand how prompts and orchestration layers behave.
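One way to surface this kind of drift early is to compare which version of each data source every workflow references. The sketch below is a hypothetical check, not a description of any particular tool: the workflow names, dataset names, and version labels are invented for illustration.

```python
from collections import defaultdict


def find_conflicting_sources(
    workflow_sources: dict[str, dict[str, str]],
) -> dict[str, dict[str, list[str]]]:
    """Given workflow -> {dataset: version}, return datasets used at more than one version."""
    versions = defaultdict(lambda: defaultdict(list))
    for workflow, sources in workflow_sources.items():
        for dataset, version in sources.items():
            versions[dataset][version].append(workflow)
    # A dataset is in conflict when workflows disagree about which version to use.
    return {
        dataset: {version: sorted(workflows) for version, workflows in by_version.items()}
        for dataset, by_version in versions.items()
        if len(by_version) > 1
    }


# Hypothetical inventory of what each workflow reads.
inventory = {
    "sales-proposals": {"product-catalog": "2024-06"},
    "support-escalation": {"product-catalog": "2024-03", "refund-policy": "v7"},
}

conflicts = find_conflicting_sources(inventory)
print(conflicts)
```

Here the two workflows disagree on the product catalog version, which is exactly the kind of silent inconsistency described above.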

Nothing has technically failed, but operational alignment begins to erode. In practice, this is where many enterprise AI initiatives become difficult to scale. The challenge is no longer model performance. It is maintaining consistency, governance, and decision clarity across interconnected systems.

Trade-offs That Influence Business and Technical Outcomes

Generative AI integration introduces choices that directly affect both system performance and business outcomes.

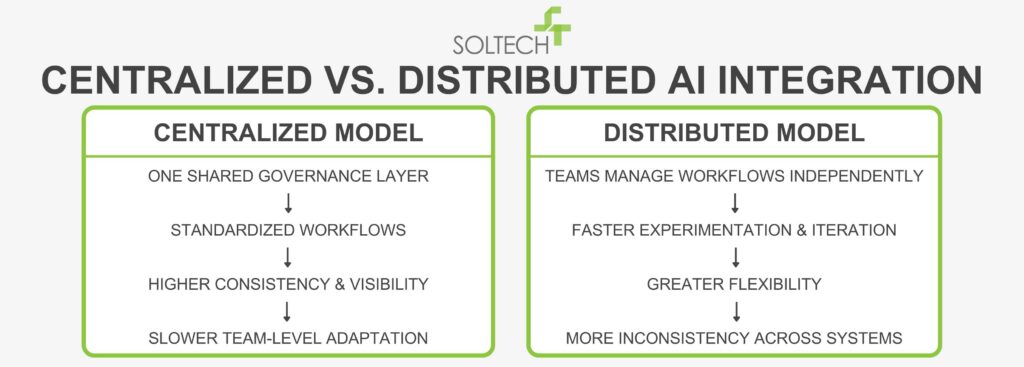

Centralization and Execution Speed

Most organizations underestimate how quickly distributed AI experimentation creates operational inconsistency. Teams optimize prompts and workflows locally, but without centralized governance, outputs drift across systems and become increasingly difficult to validate.

Most organizations initially optimize for speed and experimentation. Over time, however, governance, visibility, and workflow consistency become increasingly important as AI adoption expands across teams and systems.

Flexibility and Accountability

Flexible systems allow teams to quickly adapt prompts, models, and workflows. This supports rapid improvement but makes it harder to trace how outputs are generated.

More structured systems introduce versioning, validation layers, and defined ownership. These improve accountability but require more upfront design and cross-team coordination.

In practice, organizations rarely fail because models are incapable. They fail because governance, traceability, and workflow accountability were treated as secondary concerns during implementation, and the cost of that choice only becomes visible at scale.

External Capability and Internal Dependency

External models provide immediate access to advanced capabilities and continuous improvement. They reduce the need for internal model management.

At the same time, they introduce dependency on external providers for performance, pricing, and updates. Internal customization increases control, but shifts responsibility for maintenance and evolution.

These decisions directly influence long-term operating costs, vendor dependency, and the organization’s ability to adapt AI systems over time.

The Role and Limits of Generative AI Integration Services

Generative AI integration services can help organizations structure their initial approach. Established AI consulting firms bring experience in designing integration layers, governance models, and architectural patterns.

Their value is most visible in helping organizations avoid early fragmentation and establish a coherent foundation.

However, their effectiveness has a natural boundary.

External services can design systems, but they do not own how those systems are used. They cannot resolve unclear data ownership, fragmented workflows, or inconsistent decision-making processes. If those remain unaddressed, AI integration tends to amplify them.

This is why successful integration efforts typically combine external guidance with strong internal ownership of systems and workflows.

Designing Workflows That Can Absorb AI

The most meaningful gains from generative AI come when it is embedded into workflows in a way that reflects how decisions are actually made.

This requires attention to several operational factors:

Where AI Outputs Enter the System

AI-generated outputs can serve as drafts, recommendations, or decision inputs. Each role carries different expectations for accuracy and validation. Systems need to reflect these differences clearly.
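As a rough illustration, the three roles can be made explicit in the system so that each carries its own release rule. The thresholds below (number of reviewers, whether citations are required) are assumptions chosen for the example, not recommended values.

```python
from enum import Enum


class OutputRole(Enum):
    DRAFT = "draft"
    RECOMMENDATION = "recommendation"
    DECISION_INPUT = "decision_input"


# Hypothetical policy: stricter roles need more approvals and cited sources.
REVIEW_POLICY = {
    OutputRole.DRAFT: {"reviewers_required": 0, "needs_source_citations": False},
    OutputRole.RECOMMENDATION: {"reviewers_required": 1, "needs_source_citations": True},
    OutputRole.DECISION_INPUT: {"reviewers_required": 2, "needs_source_citations": True},
}


def can_release(role: OutputRole, approvals: int, has_citations: bool) -> bool:
    """Return True when an output meets the validation bar for its role."""
    policy = REVIEW_POLICY[role]
    if policy["needs_source_citations"] and not has_citations:
        return False
    return approvals >= policy["reviewers_required"]


print(can_release(OutputRole.DRAFT, approvals=0, has_citations=False))
print(can_release(OutputRole.DECISION_INPUT, approvals=1, has_citations=True))
```

The value of encoding the policy is less in the specific numbers than in making the expectation for each role visible and changeable in one place.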

Validation as a Designed Capacity

As output volume increases, validation becomes a constraint. Organizations that explicitly design for review capacity tend to maintain speed and quality. Those that do not often experience slower execution despite faster generation.
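The arithmetic behind this is simple enough to sketch. The function below assumes a fixed weekly generation rate and a fixed weekly review capacity, both hypothetical numbers, and tracks how many items are left unreviewed at the end of each week.

```python
def review_backlog(generated_per_week: int, reviewed_per_week: int, weeks: int) -> list[int]:
    """Return the number of unreviewed items at the end of each week."""
    backlog = 0
    history = []
    for _ in range(weeks):
        # Backlog grows by the gap between output and review capacity, never below zero.
        backlog = max(0, backlog + generated_per_week - reviewed_per_week)
        history.append(backlog)
    return history


# Hypothetical team: reviewers can validate 50 proposals per week.
before_ai = review_backlog(generated_per_week=40, reviewed_per_week=50, weeks=4)
after_ai = review_backlog(generated_per_week=120, reviewed_per_week=50, weeks=4)

print(before_ai)  # [0, 0, 0, 0]
print(after_ai)   # [70, 140, 210, 280]
```

With review capacity unchanged, tripling generation does not triple throughput. It creates a queue that grows by 70 items every week, which is the slower execution described above.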

Governance as an Operational Requirement

Enterprise adoption increasingly requires:

- Versioning of prompts and model configurations

- Audit trails for how outputs are generated and used

- Clear ownership of AI-driven processes

These are not compliance add-ons. They are necessary for maintaining trust in system outputs.
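A minimal sketch of the first two items, using only the Python standard library, might look like the following. The field names, the model name `example-model`, and the owner `legal-ops` are placeholders; a real system would persist these records rather than hold them in memory.

```python
import hashlib
import json
from datetime import datetime, timezone


def config_version(prompt_template: str, model: str, temperature: float) -> str:
    """Derive a short, stable version ID from the prompt and model configuration."""
    payload = json.dumps(
        {"prompt_template": prompt_template, "model": model, "temperature": temperature},
        sort_keys=True,
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()[:12]


def audit_record(version: str, owner: str, output_text: str, used_for: str) -> dict:
    """Capture which configuration produced an output, who owns it, and how it was used."""
    return {
        "config_version": version,
        "owner": owner,
        "output_sha256": hashlib.sha256(output_text.encode("utf-8")).hexdigest(),
        "used_for": used_for,
        "recorded_at": datetime.now(timezone.utc).isoformat(),
    }


v1 = config_version("Summarize the contract: {text}", "example-model", 0.2)
v2 = config_version("Summarize the contract in plain language: {text}", "example-model", 0.2)
record = audit_record(v1, owner="legal-ops", output_text="Summary ...", used_for="customer proposal")

print(v1 != v2)  # any change to the prompt yields a new version ID
print(record["config_version"] == v1)
```

Deriving the version from the content means a prompt cannot change without its identifier changing too, so every audit record points at exactly the configuration that produced the output.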

As AI systems become embedded into operational workflows, organizations inherit risks that extend beyond hallucinations alone. Retrieval failures, prompt injection vulnerabilities, inconsistent model behavior, stale contextual data, and sensitive information exposure can all create downstream operational consequences. Most organizations underestimate these risks during initial deployment because systems appear functional long before they become governable at scale.

Teams also encounter practical operational concerns that become more visible at scale: prompt versioning, evaluation consistency, latency tradeoffs, and cost management across multiple models and integrations.

In many cases, the long-term challenge is not deploying AI, but maintaining predictable behavior as systems evolve.

Feedback Loops and Continuous Alignment

Capturing how AI outputs are edited, approved, or rejected allows systems to improve over time. Without structured feedback, workflows evolve while models remain static, creating misalignment.
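One simple form of structured feedback is to log each reviewer action against the prompt configuration that produced the output, then compare approval rates between configurations. The class below is a hypothetical in-memory sketch; the action names and version labels are assumptions for the example.

```python
from collections import Counter, defaultdict

ACTIONS = {"approved", "edited", "rejected"}


class FeedbackLog:
    """Count reviewer actions per prompt/model configuration."""

    def __init__(self) -> None:
        self._counts = defaultdict(Counter)

    def record(self, config_version: str, action: str) -> None:
        if action not in ACTIONS:
            raise ValueError(f"unknown action: {action}")
        self._counts[config_version][action] += 1

    def approval_rate(self, config_version: str) -> float:
        """Share of outputs approved without edits; 0.0 when nothing was recorded."""
        counts = self._counts[config_version]
        total = sum(counts.values())
        return counts["approved"] / total if total else 0.0


log = FeedbackLog()
for action in ["approved", "edited", "rejected", "approved"]:
    log.record("prompt-v1", action)
for action in ["approved", "approved", "approved", "edited"]:
    log.record("prompt-v2", action)

print(log.approval_rate("prompt-v1"))  # 0.5
print(log.approval_rate("prompt-v2"))  # 0.75
```

Even a tally this coarse gives teams a shared signal for whether a prompt change improved outputs, instead of relying on individual impressions.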

Recent Gartner research has similarly emphasized that long-term enterprise AI value depends more on operational integration and governance maturity than experimentation alone.

Integration as a Business and System Alignment Decision

Generative AI integration surfaces how well your systems are aligned. It highlights gaps in data consistency, workflow design, and decision ownership more quickly than traditional systems. Organizations that approach integration intentionally often use it to:

- Clarify data ownership across systems

- Standardize critical workflows without limiting flexibility

- Align outputs with how decisions are made

A balanced approach typically includes:

- Centralized governance for visibility and consistency

- Distributed flexibility within defined system boundaries

- Clear alignment between data sources, workflows, and decision points

As AI adoption expands across departments and workflows, organizations also face increasing complexity around governance, monitoring, and operational consistency. Systems that function well in isolated use cases often become harder to validate once outputs influence customer interactions, compliance processes, or cross-functional decision-making.

Governance therefore becomes a design consideration, not simply a compliance exercise.

What Generative AI Ultimately Reveals

Generative AI integration is best understood as a reflection of system maturity. It increases what your organization can produce, but it also exposes how well your systems coordinate, validate, and act on that output.

For both business and technical leadership, the implication is the same. The value of generative AI is not determined by model capability alone, but by how effectively it is integrated into the structure of your operations.

Organizations that focus on alignment tend to see improvements in execution speed, decision quality, and operational consistency. Those that do not often experience increased activity without corresponding gains in outcomes.

The organizations creating lasting value with generative AI will not necessarily be the fastest adopters. They will be the ones that integrate AI into workflows with clear accountability, operational discipline, and sustainable governance structures.

Most enterprise AI challenges are not model problems. They are systems-design problems.

FAQs

How do you integrate generative AI into enterprise workflows?

You don’t start with the model—you start with the workflow. Define where outputs enter the process, how they’re validated, and who owns the outcome. Integration is about how work moves, not just how systems connect.

How do generative AI integration services work?

They typically design and implement the infrastructure that connects models to enterprise systems. They’re useful early on, but long-term success depends on internal ownership of data, workflows, and governance.

How do you integrate generative AI models into existing systems?

Models are connected through APIs or middleware, but the critical work is defining how outputs are validated, versioned, and maintained within existing workflows. That’s where most implementations succeed or fail.

What is the primary ROI of generative AI integration?

The initial return comes from increased output speed. The long-term return depends on whether your system can validate and act on that output efficiently. Without that, increased speed creates more friction, not less.

Thayer Tate

Chief Technology Officer

Thayer is the Chief Technology Officer at SOLTECH, bringing over 20 years of experience in technology and consulting to his role. Throughout his career, Thayer has focused on successfully implementing and delivering projects of all sizes. He began his journey in the technology industry with renowned consulting firms like PricewaterhouseCoopers and IBM, where he gained valuable insights into handling complex challenges faced by large enterprises and developed detailed implementation methodologies.

Thayer’s expertise expanded as he obtained his Project Management Professional (PMP) certification and joined SOLTECH, an Atlanta-based technology firm specializing in custom software development, technology consulting, and IT staffing. During his tenure at SOLTECH, Thayer honed his skills by managing the design and development of numerous projects, eventually assuming executive responsibility for leading the technical direction of SOLTECH’s software solutions.

As a thought leader and industry expert, Thayer writes articles on technology strategy and planning, software development, project implementation, and technology integration. Thayer’s aim is to empower readers with practical insights and actionable advice based on his extensive experience.